Revolutionizing AI: The Rise of Tsetlin Machines

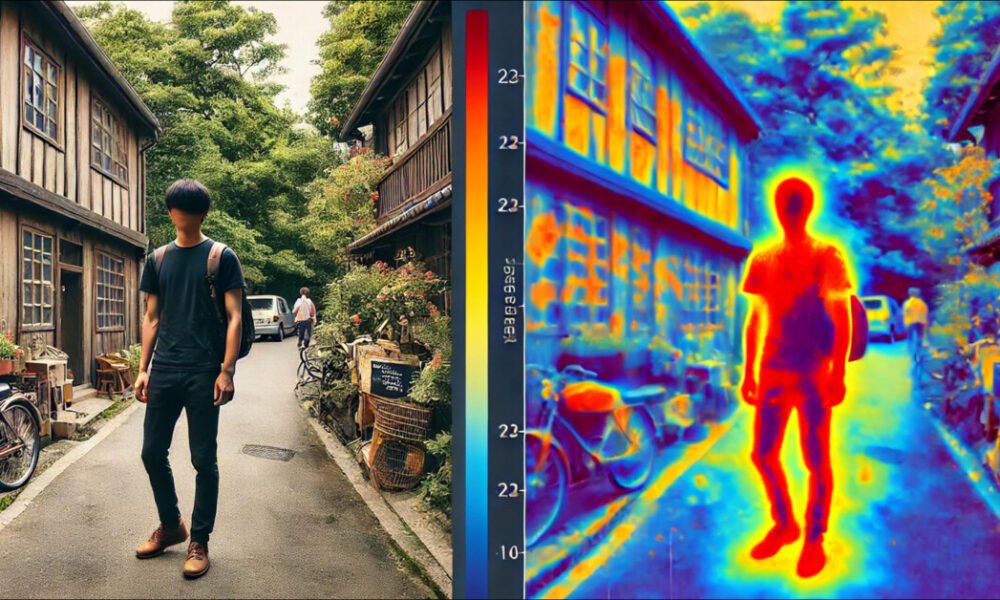

The rapid growth of Artificial Intelligence has brought a pressing problem: energy consumption. Modern AI models, particularly those based on deep learning and neural networks, are power-hungry to train and run, and that demand carries a real environmental cost. As AI becomes more integrated into our daily lives, reducing its energy footprint becomes an environmental priority.

Introducing the Tsetlin Machine: A Solution for Sustainable AI

The Tsetlin Machine offers a promising answer to AI's energy problem. Unlike traditional neural networks, Tsetlin Machines take a rule-based approach that is simpler, more interpretable, and significantly less energy-intensive. This methodology rethinks how AI systems learn and make decisions, paving the way for a more sustainable future.

Unraveling the Tsetlin Machine: A Paradigm Shift in AI

Tsetlin Machines learn through a form of reinforcement learning: teams of Tsetlin Automata, which are simple finite-state machines, adjust their internal states in response to reward and penalty feedback. As they learn, the machines build clear, human-readable rules (logical clauses over the inputs), which sets them apart from the "black box" nature of neural networks. Recent advancements, such as deterministic state jumps, have further improved Tsetlin Machines, making them faster, more responsive, and more energy-efficient.
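
To make the feedback loop concrete, here is a minimal Python sketch of a single two-action Tsetlin Automaton. The class name `TsetlinAutomaton`, the `n_states_per_action` parameter, and the toy environment in `run` are illustrative assumptions rather than part of any particular library. A full Tsetlin Machine coordinates many such automata and applies feedback probabilistically, which this sketch leaves out.

```python
class TsetlinAutomaton:
    """Two-action automaton with 2 * n_states_per_action states.

    States 1..n select action 0; states n+1..2n select action 1.
    """

    def __init__(self, n_states_per_action: int = 3) -> None:
        self.n = n_states_per_action
        # Start on the action-0 side, right next to the decision boundary.
        self.state = self.n

    def action(self) -> int:
        return 1 if self.state > self.n else 0

    def reward(self) -> None:
        # A reward pushes the state deeper into the current action's half,
        # making that action harder to abandon.
        if self.action() == 1:
            self.state = min(self.state + 1, 2 * self.n)
        else:
            self.state = max(self.state - 1, 1)

    def penalize(self) -> None:
        # A penalty moves the state toward the boundary, and across it
        # if the automaton is already at the edge.
        if self.action() == 1:
            self.state -= 1
        else:
            self.state += 1


def run(automaton: TsetlinAutomaton, steps: int, rewarded_action: int = 1) -> int:
    """Toy environment (an assumption): one action is always rewarded,
    the other is always penalized."""
    for _ in range(steps):
        if automaton.action() == rewarded_action:
            automaton.reward()
        else:
            automaton.penalize()
    return automaton.state


if __name__ == "__main__":
    ta = TsetlinAutomaton(n_states_per_action=3)
    final_state = run(ta, steps=5)
    print("final state:", final_state, "action:", ta.action())
```

In this toy setup, an automaton that starts on the action-0 side crosses the boundary after a single penalty and then settles into its deepest action-1 state. The only per-step work is an integer comparison and an increment or decrement.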

Navigating the Energy Challenge in AI with Tsetlin Machines

The exponential growth of AI has led to a surge in energy consumption, driven mainly by the training and deployment of energy-intensive deep learning models. The environmental impact can be significant: by one widely cited estimate, training a single large language model, including architecture search, can emit roughly as much CO₂ as five cars over their lifetimes. This underscores the urgency of developing energy-efficient AI models like the Tsetlin Machine that strike a balance between performance and sustainability.

The Energy-Efficient Alternative: Tsetlin Machines vs. Neural Networks

In comparative analyses, Tsetlin Machines have been reported to be up to 10,000 times more energy-efficient than neural networks. Their lightweight binary operations reduce the computational burden, allowing them to approach or match the accuracy of traditional models on some tasks while consuming only a fraction of the power. Tsetlin Machines are well suited to energy-constrained environments and are designed to run efficiently on standard, low-power hardware, minimizing the overall energy footprint of AI operations.
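
The "lightweight binary operations" mentioned above can be illustrated with a small Python sketch. It assumes that inputs are packed into integer bitmasks and that each clause is a conjunction (AND) of selected inputs and negated inputs. The function names and the hand-written XOR clauses are hypothetical, chosen for illustration rather than learned by training.

```python
from typing import List, Tuple

# A clause is a pair (include_pos, include_neg) of bitmasks:
# bit i of include_pos means "feature i must be 1",
# bit i of include_neg means "feature i must be 0".
Clause = Tuple[int, int]


def clause_output(x: int, n_features: int, include_pos: int, include_neg: int) -> int:
    """Evaluate one conjunctive clause using only bitwise operations."""
    mask = (1 << n_features) - 1
    not_x = ~x & mask  # negated inputs, limited to n_features bits
    pos_ok = (x & include_pos) == include_pos
    neg_ok = (not_x & include_neg) == include_neg
    return int(pos_ok and neg_ok)


def classify(x: int, n_features: int,
             positive: List[Clause], negative: List[Clause]) -> int:
    """Positive clauses vote for class 1, negative clauses vote against it."""
    votes = sum(clause_output(x, n_features, p, n) for p, n in positive)
    votes -= sum(clause_output(x, n_features, p, n) for p, n in negative)
    return int(votes >= 0)


# Hand-written rules for XOR over two features (bit 0 = x0, bit 1 = x1).
POSITIVE = [(0b01, 0b10),  # x0 AND NOT x1
            (0b10, 0b01)]  # x1 AND NOT x0
NEGATIVE = [(0b11, 0b00),  # x0 AND x1
            (0b00, 0b11)]  # NOT x0 AND NOT x1

if __name__ == "__main__":
    for x in (0b00, 0b01, 0b10, 0b11):
        print(f"x1x0={x:02b} -> class {classify(x, 2, POSITIVE, NEGATIVE)}")
```

Each clause reads directly as a rule such as "x0 AND NOT x1", which is where the interpretability comes from. Evaluating it takes a few AND, NOT, and compare operations instead of floating-point multiplications.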

Tsetlin Machines: Transforming the Energy Sector

Tsetlin Machines also have promising applications within the energy sector itself, including smart grids, predictive maintenance, and renewable energy management. Their ability to optimize energy distribution and forecast demand makes them well suited to building a more sustainable and efficient energy grid. From preventing costly outages to extending the lifespan of equipment, Tsetlin Machines can help drive a greener future in the energy sector.

Innovations and Advancements in Tsetlin Machine Research

Recent advancements in Tsetlin Machine research have paved the way for improved performance and efficiency. Innovations such as multi-step finite-state automata and deterministic state changes have made Tsetlin Machines increasingly competitive with traditional AI models, particularly in scenarios where low power consumption is a priority. These developments continue to redefine the landscape of AI, offering a sustainable path forward for advanced technology.

Embracing Tsetlin Machines: Pioneering Sustainability in Technology

The Tsetlin Machine represents more than just a new AI model; it signifies a paradigm shift towards sustainability in technology. By focusing on simplicity and energy efficiency, Tsetlin Machines challenge the notion that powerful AI must come at a high environmental cost. Embracing Tsetlin Machines offers a path forward where technology and environmental responsibility coexist harmoniously, shaping a greener and more innovative world.

What is the Tsetlin Machine and how does it reduce energy consumption?

The Tsetlin Machine is a rule-based approach to machine learning that makes decisions using simple logical rules while maintaining high accuracy. Because evaluating those rules requires less computation than running a traditional AI model, it consumes less energy.

How does the Tsetlin Machine compare to other AI models in terms of energy efficiency?

Studies have shown that the Tsetlin Machine consumes significantly less energy than other AI models, such as deep learning neural networks. This is due to its simplified decision-making process, which requires fewer computations and therefore less energy.

Can the Tsetlin Machine be applied to different industries to reduce energy consumption?

Yes, the Tsetlin Machine has the potential to be applied to a wide range of industries, including healthcare, finance, and transportation, to reduce energy consumption in AI applications. Its energy efficiency makes it an attractive option for companies looking to reduce their carbon footprint.

What are the potential cost savings associated with using the Tsetlin Machine for AI applications?

By reducing energy consumption, companies can save on electricity costs associated with running AI models. Additionally, the simplified algorithm of the Tsetlin Machine can lead to faster decision-making, potentially increasing productivity and reducing labor costs.

Are there any limitations to using the Tsetlin Machine for AI applications?

While the Tsetlin Machine offers significant energy savings compared to traditional AI models, it may not be suitable for all use cases. Its simplified decision-making process may not be as effective for complex tasks that require deep learning capabilities. However, for many applications, the Tsetlin Machine can be a game-changer in terms of reducing energy consumption.