Understanding the Evolving Language of Artificial Intelligence

Artificial intelligence is transforming our world and creating a new lexicon to describe its impact. Within just five minutes of delving into AI, you’ll encounter terms like LLMs, RAG, RLHF, and many more, which can leave even the most knowledgeable tech professionals feeling perplexed. This glossary aims to demystify that jargon and will be updated frequently to remain relevant, much like the AI systems it refers to.

What Is Artificial General Intelligence (AGI)?

Artificial general intelligence, or AGI, is a loosely defined concept, usually indicating AI that is more capable than the average human at many, if not most, tasks. Sam Altman, CEO of OpenAI, likens AGI to a “median human you could hire as a co-worker.” OpenAI’s charter describes AGI as “highly autonomous systems that outperform humans at most economically valuable work,” while Google DeepMind views it as AI that at least matches human capability at most cognitive tasks. Confused? You’re not alone: experts in AI research are still grappling with its meaning.

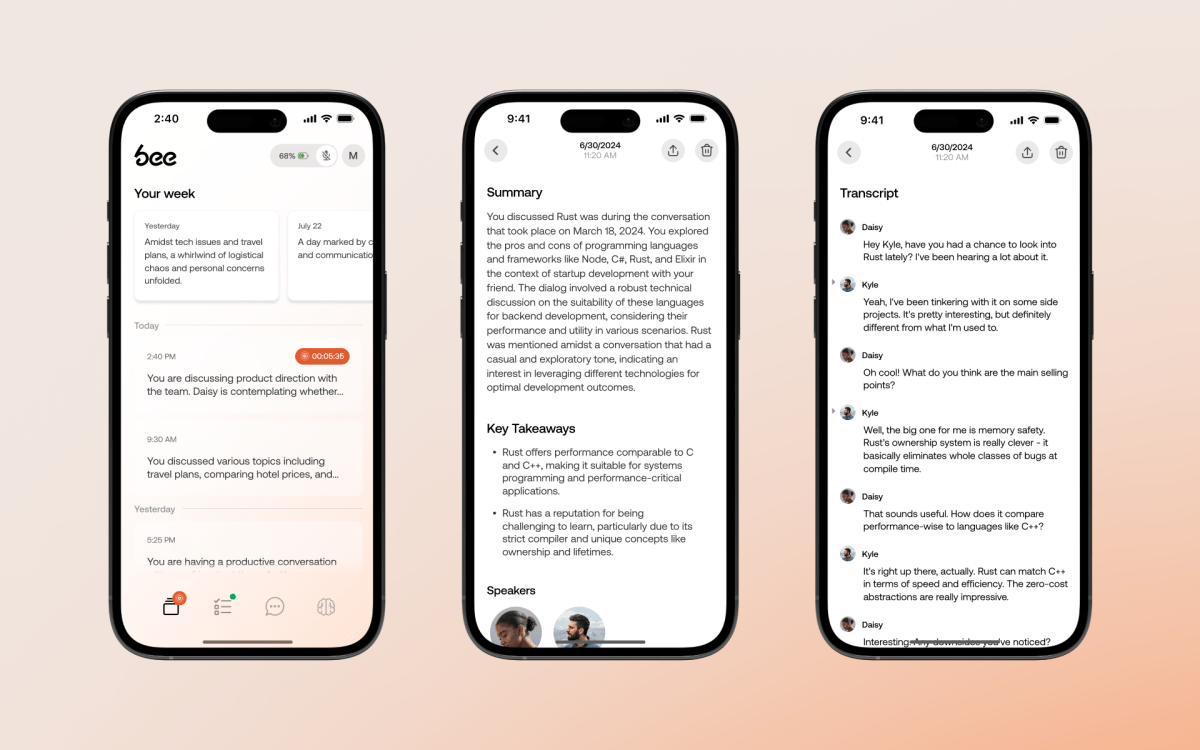

Defining AI Agents

An AI agent goes beyond basic chatbots by using AI technologies to accomplish tasks like filing expenses, booking flights, or even writing code. However, the term “AI agent” can vary in meaning depending on context, as the field is still developing. At its core, an AI agent is an autonomous system capable of performing multi-step tasks by utilizing various AI systems.

Anatomy of API Endpoints

Think of API endpoints as invisible “buttons” within software that other applications can press to execute functions. Developers utilize these interfaces to create integrations—such as enabling one application to retrieve data from another or allowing an AI agent to manage external services autonomously, without human intervention. As AI agents become more advanced, they can discover and leverage these endpoints, opening doors to new automation possibilities.

Chain-of-Thought Reasoning

In human cognition, simple questions often yield quick answers, but more complex problems may require writing down intermediate steps, such as working out how many chickens and cows a farmer has from the total number of heads and legs. In AI, chain-of-thought reasoning means breaking a problem into smaller intermediate steps to improve the final answer. This usually lengthens response time, but the answer is more likely to be correct, especially for logic or coding problems. Reasoning models are developed from traditional large language models and optimized for chain-of-thought reasoning through reinforcement learning.
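The farmer puzzle can be written out step by step. The sketch below is only an illustration of spelling out intermediate steps; the function name and the totals of 40 heads and 120 legs are assumptions chosen for the example.

```python
def solve_heads_and_legs(heads: int, legs: int) -> tuple[int, int]:
    """Return (chickens, cows), following the intermediate steps one at a time."""
    # Step 1: every animal has one head, so chickens + cows = heads.
    # Step 2: chickens have 2 legs and cows have 4, so 2*chickens + 4*cows = legs.
    # Step 3: if every animal were a chicken there would be 2*heads legs,
    #         and each cow contributes 2 legs beyond that.
    extra_legs = legs - 2 * heads
    if extra_legs < 0 or extra_legs % 2 != 0:
        raise ValueError("no whole-number solution")
    cows = extra_legs // 2
    # Step 4: whatever is not a cow is a chicken.
    chickens = heads - cows
    if chickens < 0:
        raise ValueError("no whole-number solution")
    return chickens, cows


# Assumed example totals: 40 heads and 120 legs.
chickens, cows = solve_heads_and_legs(heads=40, legs=120)
print(f"{chickens} chickens and {cows} cows")  # 20 chickens and 20 cows
```

A reasoning model does something loosely similar in text: it produces the intermediate steps before committing to a final answer.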

(See: Large Language Model)

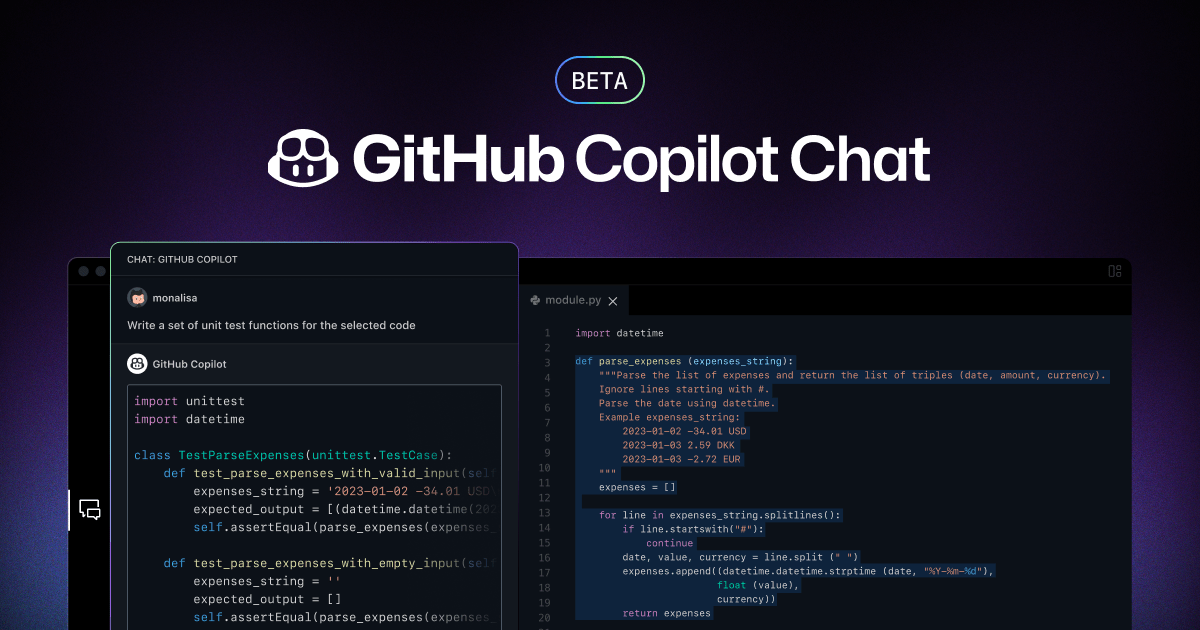

The Role of Coding Agents

A coding agent is a specialized AI tool that can autonomously write, test, and debug code, making it akin to a tireless intern. Unlike simple coding suggestions for human review, coding agents handle iterative, trial-and-error tasks efficiently across entire codebases, identifying bugs and implementing fixes with limited oversight.

Understanding Compute

In AI, “compute” refers to the computational power needed to train and run AI models. The term often doubles as shorthand for the hardware that supplies that power, such as GPUs and CPUs, which forms the backbone of the industry’s ability to train and deploy models.

Deep Learning Explained

Deep learning is a subset of machine learning that uses multi-layered artificial neural networks (ANNs) to find complex correlations in data. These algorithms, inspired by the human brain’s neural pathways, can identify the important features in data themselves, without engineers having to define them by hand. However, deep learning demands vast amounts of data to yield accurate results and typically takes longer to train than simpler machine learning models.

(See: Neural Network)

The Impact of Diffusion Technology

Diffusion is foundational to many AI models that generate art, music, and text. It works by gradually “destroying” the structure of data by adding noise until nothing recognizable remains, and then learning to reverse that process. A trained model can then recover structured data from what looks like pure noise.
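The “destroying” half of the process is simple enough to sketch. The snippet below is a minimal illustration under stated assumptions: the function name `add_noise`, the sample signal, and the single `noise_level` parameter are hypothetical, and the learned reverse step, which requires a trained neural network, is not shown.

```python
import math
import random


def add_noise(data, noise_level, rng):
    """Blend data with Gaussian noise.

    noise_level=0.0 returns the data unchanged; noise_level=1.0 returns pure noise.
    """
    keep = math.sqrt(1.0 - noise_level)  # how much of the original survives
    mix = math.sqrt(noise_level)         # how much noise is mixed in
    return [keep * x + mix * rng.gauss(0.0, 1.0) for x in data]


rng = random.Random(0)
signal = [1.0, -1.0, 1.0, -1.0]  # assumed toy data with an obvious pattern
for level in (0.0, 0.5, 1.0):
    noisy = add_noise(signal, level, rng)
    print(level, [round(v, 2) for v in noisy])
```

As the noise level rises, the alternating pattern fades. A diffusion model is trained to undo that fading one step at a time.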

Distillation Techniques in AI

Distillation is a technique in which a larger “teacher” model is used to train a smaller “student” model: the student learns to reproduce the teacher’s outputs, with the aim of a more efficient model and minimal loss of quality. Companies such as OpenAI are thought to have used it to build faster models like GPT-4 Turbo.

However, distillation from competitor models may breach service agreements.
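One common way to express the teacher-student relationship is a loss that measures how far the student’s predicted probabilities are from the teacher’s. The sketch below assumes that formulation (a temperature-softened KL divergence); the function names and example scores are hypothetical, and it does not describe any particular company’s training setup.

```python
import math


def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; higher temperature flattens them."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence between the teacher's and student's softened distributions."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


teacher = [4.0, 1.0, 0.5]     # assumed teacher scores for three possible answers
good_student = [3.8, 1.1, 0.4]
poor_student = [0.5, 4.0, 1.0]
print(distillation_loss(teacher, good_student))
print(distillation_loss(teacher, poor_student))
```

The student that ranks the answers the way the teacher does gets the lower loss, which is the signal training would use to pull the student toward the teacher.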

Fine-Tuning for Specific Tasks

Fine-tuning is the further training of an AI model, optimizing it for specific tasks with new, specialized data. Startups frequently employ this technique to customize large language models for commercial applications.

(See: Large Language Model [LLM])

Generative Adversarial Networks (GANs)

A GAN, or Generative Adversarial Network, is a machine learning framework for generating realistic data, including deepfakes. It comprises two neural networks: a generator, which produces candidate outputs, and a discriminator, which tries to tell those outputs apart from real data. The competition between the two steadily refines output quality.

What Is AI Hallucination?

In AI parlance, “hallucination” refers to instances where models generate incorrect information, a significant quality concern. Hallucinations can result from training data gaps and pose real-world risks, prompting a shift towards more specialized AI models to mitigate misinformation.

The Inference Process

Inference is the active stage of running an AI model, generating predictions based on learned patterns. Effective inference requires prior training and can be executed on various hardware, though performance will vary significantly depending on the equipment.

(See: Training)

Introduction to Large Language Models (LLMs)

Large language models, or LLMs, are the foundational technology behind popular AI assistants like ChatGPT and others. They process inputs through complex neural networks that learn language patterns from extensive text sources, generating contextually appropriate responses.

(See: Neural Network)

Memory Cache in AI Systems

Memory cache enhances inference processes by saving specific calculations for future queries, thereby improving efficiency and reducing computational load. Techniques like KV caching are instrumental in transformer models for accelerating response times.
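The idea behind KV caching can be sketched in a few lines. This is a simplified single-head illustration, not a real transformer: the class and function names are hypothetical, and the query, key, and value vectors are passed in directly, whereas a real model would compute them from each token.

```python
import math


class KVCache:
    """Stores the key and value vectors of tokens that were already processed."""

    def __init__(self):
        self.keys = []
        self.values = []

    def append(self, key, value):
        self.keys.append(key)
        self.values.append(value)


def attend(query, keys, values):
    """Scaled dot-product attention of one query over all stored keys and values."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    peak = max(scores)
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) / total for i in range(dim)]


def decode_step(cache, query, key, value):
    """Handle one new token: store only its key/value and reuse everything cached."""
    cache.append(key, value)
    return attend(query, cache.keys, cache.values)


cache = KVCache()
# Assumed toy vectors for two tokens arriving one after another.
print(decode_step(cache, query=[1.0, 0.0], key=[1.0, 0.0], value=[1.0, 2.0]))
print(decode_step(cache, query=[0.0, 1.0], key=[0.0, 1.0], value=[3.0, 4.0]))
```

Without the cache, every new token would require recomputing the keys and values of all earlier tokens. With it, each step adds one entry and reuses the rest.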

(See: Inference)

The Role of Neural Networks

A neural network is a multi-layered algorithmic structure that drives deep learning and the explosive growth of generative AI. The idea was originally inspired by the structure of the human brain, but it was the arrival of graphics processing units (GPUs) that unlocked new levels of performance across a wide range of applications.

(See: Large Language Model [LLM])

The Significance of Open Source

Open source refers to software and AI models whose source code is publicly accessible for modification and inspection, promoting collaborative development and ensuring transparency. Meta’s Llama models serve as a prime example, while closed-source models like OpenAI’s GPT remain proprietary.

Understanding Parallelization

Parallelization involves executing multiple tasks simultaneously, a crucial factor in efficient AI training and inference. The architecture of modern GPUs enables thousands of parallel computations, significantly boosting model development speed.

The RAMageddon Trend

RAMageddon refers to the shortage of random access memory (RAM) in the tech industry, exacerbated by the AI boom as companies compete for resources, driving up costs and stifling supply for other sectors like gaming and consumer electronics.

Exploring Recursive Self-Improvement (RSI)

Recursive self-improvement describes AI models enhancing their capabilities autonomously, a concept that teeters on the brink of transformative progress. While some view this as a potential cataclysm, many startups see it as a new frontier for research in AI development.

Reinforcement Learning Explained

Reinforcement learning trains AI through a trial-and-error model, where successes translate into rewards. This method is particularly effective for tasks like gaming and robotics, and it has become essential for refining large language models.
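A tiny trial-and-error loop shows the principle. The sketch below uses a standard textbook setup (an epsilon-greedy “bandit”) with assumed, fixed payouts; the function name and all numbers are hypothetical, and real systems are far more elaborate.

```python
import random


def run_bandit(payouts, steps, epsilon, seed=0):
    """Learn a value estimate for each option by trial and error."""
    rng = random.Random(seed)
    estimates = [0.0] * len(payouts)
    pulls = [0] * len(payouts)
    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: try a random option.
            action = rng.randrange(len(payouts))
        else:
            # Exploit: pick the option with the best estimate so far.
            action = max(range(len(payouts)), key=lambda a: estimates[a])
        reward = payouts[action]  # fixed reward per option, assumed for simplicity
        pulls[action] += 1
        # Move the estimate toward the observed reward (running average).
        estimates[action] += (reward - estimates[action]) / pulls[action]
    return estimates


estimates = run_bandit(payouts=[1.0, 5.0, 2.0], steps=2000, epsilon=0.1)
best = max(range(len(estimates)), key=lambda a: estimates[a])
print(estimates, best)
```

With `epsilon=0.0` the agent never explores and stays stuck on the first option it tried, which is why some randomness in the trials matters.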

The Role of Tokens in Human-Machine Communication

Tokens serve as the building blocks of AI-human interaction, representing segments of data processed by large language models (LLMs). Through tokenization, AI can effectively understand and generate responses, with costs typically calculated on a per-token basis.
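To make the per-token idea concrete, the sketch below splits text into word and punctuation tokens and prices the result. Both parts are assumptions for illustration: production LLMs use subword tokenizers that split text differently, and the price of 2.00 per million tokens is made up.

```python
import re


def toy_tokenize(text):
    """Split text into word and punctuation tokens (a stand-in for subword tokenization)."""
    return re.findall(r"\w+|[^\w\s]", text)


def estimate_cost(token_count, price_per_million_tokens):
    """Cost of processing token_count tokens at a per-million-token price."""
    return token_count * price_per_million_tokens / 1_000_000


tokens = toy_tokenize("Tokens are the building blocks of LLMs.")
print(tokens)
print(len(tokens), "tokens")
# Hypothetical price of 2.00 per million tokens.
print(estimate_cost(len(tokens), price_per_million_tokens=2.00))
```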

Understanding Token Throughput

Token throughput measures how many tokens an AI system processes in a given timeframe, usually expressed in tokens per second. It determines how many users can be served simultaneously and how swiftly responses are generated, so maximizing token throughput is a central goal of AI infrastructure optimization.
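The arithmetic is straightforward. In the sketch below, the function names and every figure (12,000 tokens in 4 seconds, 20 tokens per second per user) are assumptions picked for illustration, not measurements from any real system.

```python
def token_throughput(tokens_generated, elapsed_seconds):
    """Tokens processed per second over a measurement window."""
    return tokens_generated / elapsed_seconds


def users_served(throughput, tokens_per_second_per_user):
    """How many users can each receive the given per-user generation speed."""
    return int(throughput // tokens_per_second_per_user)


# Assumed figures: 12,000 tokens generated in 4 seconds.
throughput = token_throughput(tokens_generated=12_000, elapsed_seconds=4.0)
print(throughput)                    # 3000.0 tokens per second
print(users_served(throughput, 20))  # 150 users at 20 tokens per second each
```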

The Training Process in Machine Learning

Training an AI model means feeding it data so that it can learn patterns and generate useful outputs. Because training is resource-intensive, hybrid approaches are sometimes used to shortcut model development and keep costs manageable.

(See: Inference)

Leveraging Transfer Learning

Transfer learning uses a previously trained model as the starting point for a new task, saving time and data by reusing the knowledge the model has already accumulated. The approach has limits: a model that relies on transfer learning usually needs additional training on task-specific data to perform well in its new domain.

(See: Fine-Tuning)

Validation Loss: A Performance Measure

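Validation loss measures how well an AI model performs on held-out data that it was not trained on, with lower values signifying better performance. Comparing it with the training loss is a standard way to spot overfitting: when training loss keeps falling while validation loss starts to rise, the model is memorizing its training data rather than generalizing, and training should be stopped or adjusted.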
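One common way to act on validation loss is early stopping: keep the model from the epoch with the lowest validation loss and stop once the loss has failed to improve for a while. The sketch below assumes that approach; the function name, the `patience` parameter, and the loss values are hypothetical.

```python
def best_epoch(validation_losses, patience=2):
    """Return the epoch with the lowest validation loss seen before stopping.

    Training is considered stopped once the loss has not improved
    for `patience` consecutive epochs.
    """
    best_index = 0
    for epoch, loss in enumerate(validation_losses):
        if loss < validation_losses[best_index]:
            best_index = epoch  # new best: remember this epoch
        elif epoch - best_index >= patience:
            break  # no improvement for `patience` epochs: stop early
    return best_index


# Assumed per-epoch validation losses: improving, then drifting upward.
losses = [0.90, 0.60, 0.50, 0.55, 0.70, 0.40]
print(best_epoch(losses, patience=2))  # 2
```

With `patience=2` the loop stops at epoch 4 and never sees the later improvement at epoch 5. That trade-off between saving compute and missing a late recovery is why patience is usually tuned per project.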

The Importance of Weights in AI

Weights are numerical parameters that determine how much importance a model gives to different features (input variables) in its training data. They control how the model evaluates its inputs and therefore shape its final output. Weights typically start out as random values and are adjusted throughout training as the model’s output moves closer to the target.

For example, an AI model predicting housing prices assigns weights to attributes like the number of bedrooms, affecting the predicted value based on historical data.
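The housing example can be sketched as a weighted sum plus a single training update. Everything specific here is an assumption for illustration: the feature names, the weight values, the base price, and the learning rate are made up, and real models have far more weights.

```python
def predict_price(features, weights, bias):
    """Weighted sum of the features plus a base price."""
    return bias + sum(weights[name] * value for name, value in features.items())


def training_step(features, actual_price, weights, bias, learning_rate):
    """Nudge each weight to shrink the gap between prediction and actual price."""
    error = predict_price(features, weights, bias) - actual_price
    return {
        name: weight - learning_rate * error * features[name]
        for name, weight in weights.items()
    }


# Hypothetical weights: value added per bedroom and per square meter.
weights = {"bedrooms": 25_000.0, "square_meters": 1_500.0}
house = {"bedrooms": 3, "square_meters": 100}

print(predict_price(house, weights, bias=50_000.0))  # 275000.0

# The house actually sold for less, so the update lowers both weights slightly.
updated = training_step(house, 250_000.0, weights, 50_000.0, learning_rate=1e-6)
print(updated)
```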

This article is regularly updated with new information.