iOS 27 to Offer Users Choice of AI Models on iPhone

iOS 27, due later this year, will reportedly let iPhone users customize their AI experience by choosing which AI models power the system's features.

Apple’s “Extensions” Feature

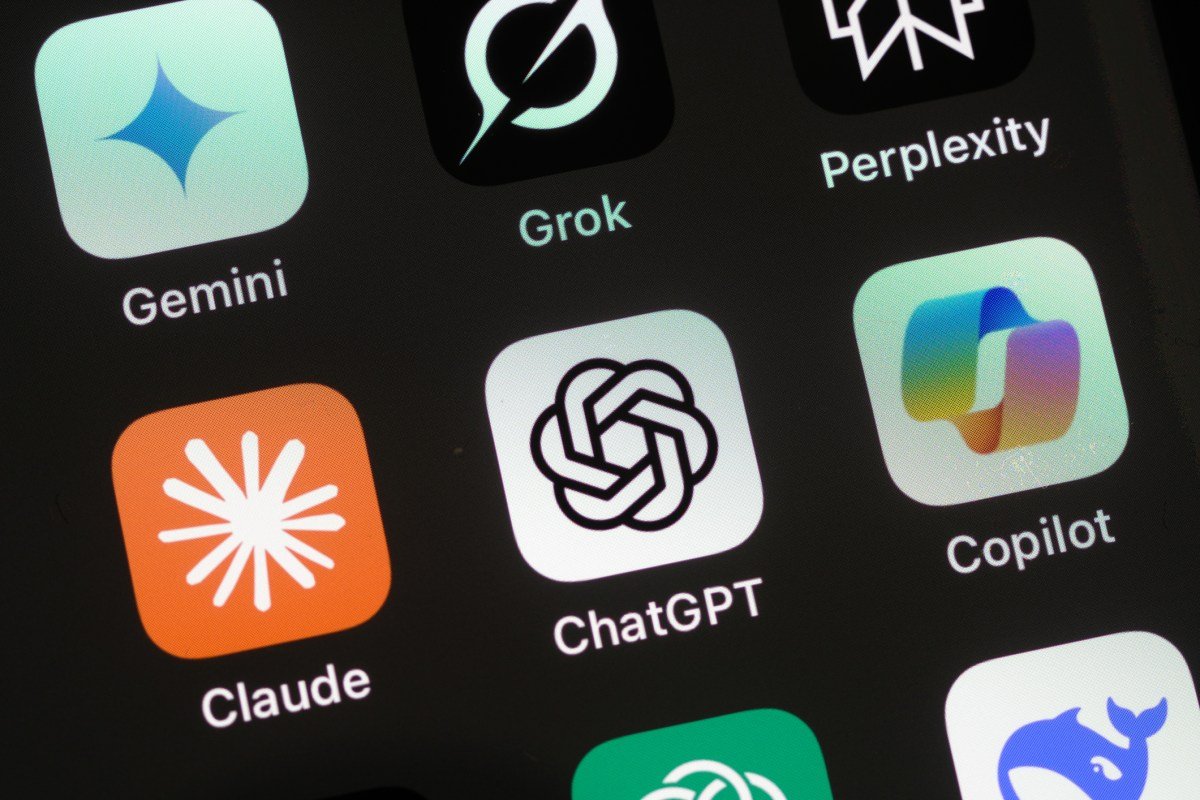

According to a Bloomberg report, Apple plans to support a variety of third-party large language models that integrate directly with the iPhone’s operating system. The feature, referred to internally as “Extensions,” will enable users to “access generative AI capabilities from installed apps on demand” through Apple Intelligence features such as Siri, Writing Tools, and Image Playground, according to early test versions of the software.

Support for iPadOS and macOS

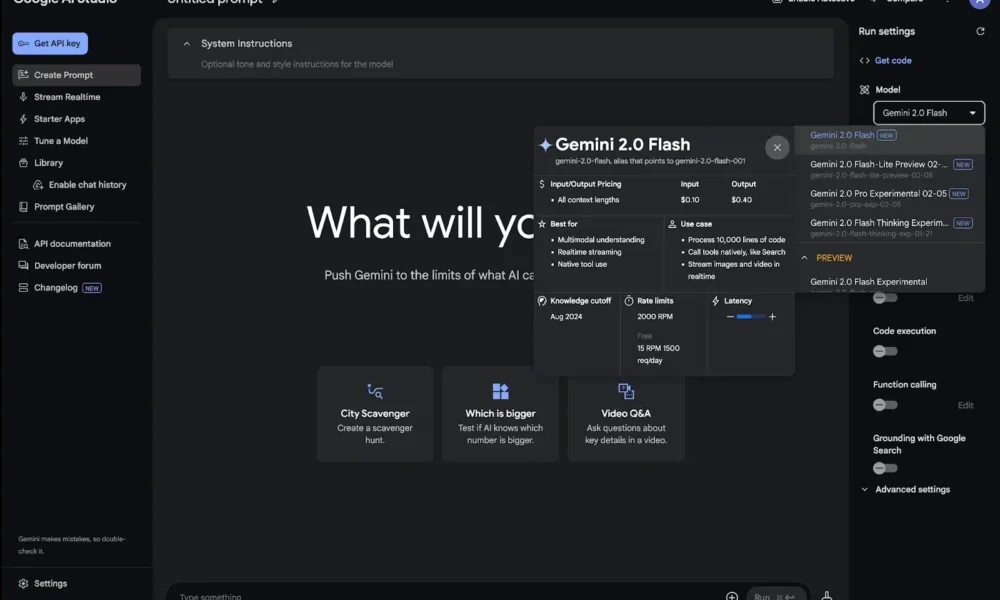

The capability won’t be limited to the iPhone; it will also come to iPadOS 27 and macOS 27. Models from Google and Anthropic are currently being tested, while ChatGPT’s status remains unclear. Because ChatGPT is already the large language model integrated with Apple Intelligence, it is likely to remain an option.

Change at the Top: A New Era for Apple

As CEO Tim Cook prepares to step down, John Ternus, his incoming successor, inherits responsibility for steering Apple’s future, particularly its AI strategy. Apple is widely perceived as “behind” its competitors in AI, and its approach appears to be to leverage existing hardware to enhance user experiences rather than to invest solely in new AI services.

Revenue Generation through AI

Despite criticism of its pace in AI development, Apple continues to generate substantial revenue from its AI initiatives. Going forward, Apple appears focused on turning its current technologies into AI-centric experiences rather than rapidly expanding its portfolio of AI services.

Here are five FAQs regarding Apple’s plans for iOS 27 and its "Choose Your Own Adventure" approach to AI models:

FAQ 1: What does "Choose Your Own Adventure" mean in the context of iOS 27?

Answer: "Choose Your Own Adventure" describes Apple's reported plan to let users select from various AI models, including third-party models, to power features across their device. This lets users tailor recommendations, interactions, and tasks to their own preferences.

FAQ 2: How will users select their preferred AI models on iOS 27?

Answer: Apple has not yet shown how model selection will work. Based on the Bloomberg report, users would likely choose from models made available by apps they have installed, possibly through a settings interface. The process could also include prompts that help the system recommend appropriate models, but Apple has not confirmed this.

FAQ 3: What benefits will this feature provide to users?

Answer: The main benefit is flexibility: users can pick the model that best suits their needs and switch between models for different tasks. Depending on the models chosen, this could mean faster responses, more relevant suggestions, and more efficient workflows.

FAQ 4: Will using multiple AI models consume more battery and resources?

Answer: Apple has not addressed this. Using multiple AI models could affect battery life and resource consumption, but Apple is likely to optimize iOS 27 to manage these resources efficiently. Users may also be able to adjust settings to balance performance and battery life.

FAQ 5: When is the expected release date for iOS 27 featuring this AI model selection?

Answer: Apple has not announced a specific release date for iOS 27. The company typically previews major iOS updates at its annual Worldwide Developers Conference (WWDC) in June and releases them to the public in September, and iOS 27 is expected to follow the same pattern.