Tim Cook: A 15-Year Legacy of Challenges and Triumphs at Apple

Over his 15-year tenure, Tim Cook has become one of the most recognizable figures in the tech world, wielding significant influence and amassing wealth estimated at around $3 billion. This fortune stems largely from performance-based equity awards, earned as Apple’s market cap grew to more than $4 trillion under his leadership.

Navigating the Complex Landscape of Big Tech

Cook’s tenure has not been free of challenges. He has had to navigate two administrations in the United States, each with distinctive views on Big Tech, China, and regulatory matters. From defying the FBI over encryption to defending the App Store against claims of monopolistic behavior, his time at the top has been full of contentious moments. As he prepares to hand the reins to incoming CEO John Ternus, these challenges will continue to shape Apple’s future.

Major Battles Throughout Cook’s Tenure

Who could forget the high-profile 2016 encryption clash with the FBI? After a mass shooting in San Bernardino, the FBI sought Apple’s assistance to unlock the gunman’s iPhone. Cook stood firm, asserting that encryption is vital for protecting individual privacy and that creating a backdoor would set a perilous precedent. The confrontation ended when the FBI found an alternative method of unlocking the phone. The episode solidified Apple’s image as a staunch advocate for privacy and left Cook in a contentious relationship with governments around the world. Ternus will inherit not only this legacy but also the responsibilities that come with it.

The App Store’s antitrust struggles have also been formidable for Cook. Epic Games famously challenged Apple’s policy mandating the use of its in-app payment system, which carries a 30% commission on sales. Apple achieved a partial victory in 2021, when it was declared not to be a monopoly, but it was still ordered to let developers link to third-party payment options. Apple’s compliance was minimal and drew further scrutiny. The Ninth Circuit Court of Appeals has since upheld a contempt ruling against the company, and Apple is now preparing a Supreme Court petition.

A Broader Antitrust Landscape

Cook’s antitrust battles go beyond Epic. In March 2024, the U.S. Department of Justice filed a lawsuit accusing Apple of unfairly maintaining its dominance in smartphones by constraining third-party apps and devices. A federal judge’s refusal to dismiss the case points to a protracted legal struggle ahead. Recent developments in India, where Apple faces a potential $38 billion fine for alleged market abuses, add another layer of complexity, especially given the company’s modest market share there of around 9%.

Balancing Act in China

Operating in China has become an increasingly delicate balancing act for Cook. Apple’s dependence on Chinese manufacturing has deepened amid geopolitical tensions. He made controversial concessions, such as removing VPN apps and storing user data on state-controlled servers. During the Trump administration, Cook skillfully navigated trade challenges and built crucial relationships. Those relationships could benefit Ternus as Cook moves into the role of executive chairman, where he can share his vast experience in this area.

The AI Challenge Ahead

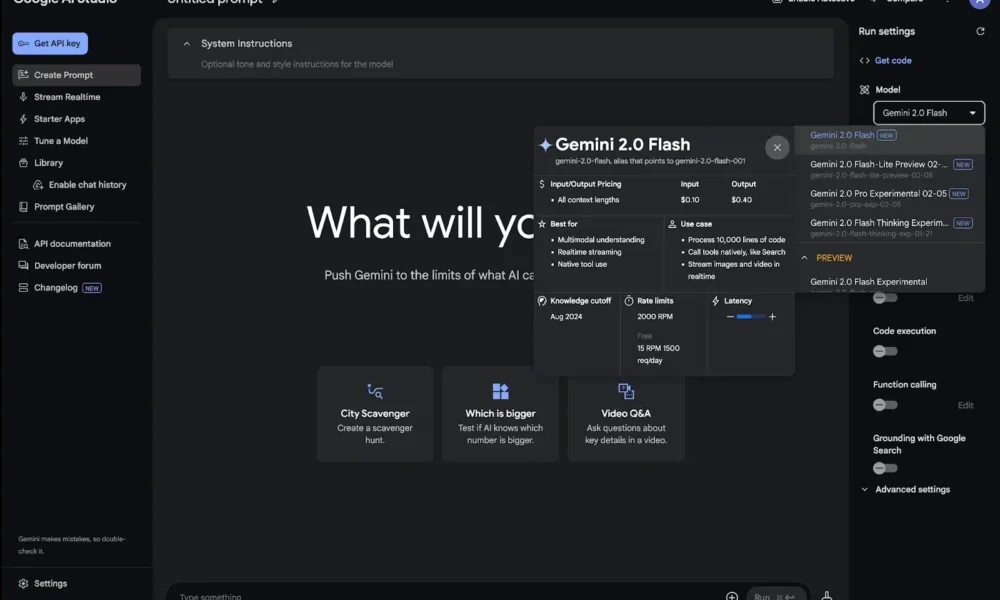

Perhaps the most pressing challenge Ternus faces is AI. Following the departure of Apple’s AI chief, John Giannandrea, the company is grappling with delays in revamping Siri and integrating advanced AI functionality. For now, Apple leans on industry leaders such as Google’s Gemini and OpenAI’s ChatGPT for new AI features. Bob O’Donnell, a market analyst, remarked that Ternus’s primary challenge will be developing a compelling AI narrative that highlights Apple’s own capabilities.

Leadership Transition and What Lies Ahead

The recent exodus of top executives at Apple poses both a challenge and an opportunity for Ternus. He takes the helm of a leadership team reshaped by the departure of several key figures. Establishing his vision will be critical as he navigates these changes.

Tim Cook’s unparalleled skill lay in managing complex relationships while keeping operations running smoothly. As Ternus takes over, it remains to be seen whether he shares this skill, or whether Cook’s continued guidance will be needed to bridge any divides that arise.

The Future of Apple and the App Economy

An overarching question looms over Ternus’s tenure: could the very ecosystem that made Apple the most valuable company in the world come to an end? With predictions that AI agents may soon overshadow the App Store model, Ternus may have to navigate a rapidly evolving landscape in which innovations beyond the iPhone reshape how users interact with technology.

FAQs about John Ternus and His Role at Apple

1. Who is John Ternus?

John Ternus is an Apple executive who has played a significant role in the development of the company’s hardware products. He has been named Apple’s incoming CEO, succeeding Tim Cook.

2. What does Ternus’s new role entail?

As a leader at Apple, Ternus is responsible for overseeing product development, engineering, and innovation. His job involves navigating complex challenges in a highly competitive market, ensuring that Apple continues to deliver cutting-edge technology.

3. What challenges is Ternus likely to face in his position?

Ternus will encounter numerous challenges, including supply chain disruptions, intense competition, evolving consumer preferences, and the need for continuous innovation. Additionally, balancing product quality and market demands will be crucial.

4. How has Ternus prepared for this leadership role?

Ternus has extensive experience working on various Apple projects and has been instrumental in the success of key products. His technical knowledge, leadership skills, and familiarity with Apple’s corporate culture equip him for the challenges ahead.

5. What impact could Ternus’s leadership have on Apple?

With Ternus at the helm, Apple may continue to innovate and adapt to market changes while maintaining its reputation for quality. His leadership style and decisions could influence future product strategies and the company’s overall direction in the tech industry.