How AI is Transforming National Security: A Double-Edged Sword

Artificial intelligence is revolutionizing how nations safeguard their security. It plays a crucial role in cybersecurity, weapons innovation, border surveillance, and even shaping public discourse. While AI offers significant strategic advantages, it also poses numerous risks. This article explores the ways AI is redefining security, the current implications, and the tough questions arising from these cutting-edge technologies.

Cybersecurity: The Battle of AI Against AI

Most modern attacks begin in cyberspace. Cybercriminals have moved from writing phishing emails by hand to using language models that generate friendly, authentic-sounding messages. In a striking case from 2024, a gang used a deepfake video of a CFO to steal $25 million from his company. The video was so convincing that an employee acted on the fraudulent order without hesitation. Some attackers also feed leaked resumes or LinkedIn data into large language models to tailor their phishing attempts, and certain groups apply generative AI to find software vulnerabilities or write malware snippets.

On the defensive side, security teams leverage AI to combat these threats. They feed network logs, user behavior data, and global threat reports into AI systems that learn to identify “normal” activity and flag suspicious behavior. In the event of a detected intrusion, AI tools can disconnect compromised systems, minimizing the potential for widespread damage that might occur while waiting for human intervention.
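To make the idea of learning "normal" activity concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a description of any real security product: the host names, the traffic figures, and the three-standard-deviation threshold are all hypothetical, and production systems use far richer models than a simple z-score.

```python
from statistics import mean, stdev

def build_baseline(history):
    """Learn what "normal" looks like: mean and standard deviation per host."""
    return {host: (mean(values), stdev(values)) for host, values in history.items()}

def flag_anomalies(baseline, current, threshold=3.0):
    """Return hosts whose current reading sits more than `threshold`
    standard deviations away from their learned baseline."""
    flagged = []
    for host, value in current.items():
        if host not in baseline:
            flagged.append(host)  # never seen before: treat as suspicious
            continue
        mu, sigma = baseline[host]
        if sigma > 0:
            z_score = abs(value - mu) / sigma
        else:
            # No variation in the history: any change at all is an outlier.
            z_score = 0.0 if value == mu else float("inf")
        if z_score > threshold:
            flagged.append(host)
    return flagged

# Hypothetical data: megabytes sent per hour by two workstations.
history = {
    "workstation-1": [120, 135, 110, 128, 131],
    "workstation-2": [40, 38, 45, 42, 41],
}
baseline = build_baseline(history)

# workstation-2 suddenly sends far more data than it ever has before.
current = {"workstation-1": 125, "workstation-2": 900}
to_isolate = flag_anomalies(baseline, current)
print(to_isolate)
```

In this toy run only workstation-2 is flagged. An automated response would then cut that machine off from the network, which is the step that saves time compared with waiting for a human analyst.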

AI’s influence extends to physical warfare as well. In Ukraine, drones are equipped with onboard sensors to target fuel trucks or radar systems prior to detonation. The U.S. has deployed AI for identifying targets for airstrikes in regions including Syria. Israel’s military recently employed an AI-based targeting system to analyze thousands of aerial images for potential militant hideouts. Nations such as China, Russia, Turkey, and the U.K. are also exploring “loitering munitions” which patrol designated areas until AI identifies a target. Such technologies promise increased precision in military operations and heightened safety for personnel. However, they introduce significant ethical dilemmas: who bears responsibility when an algorithm makes an erroneous target selection? Experts warn of “flash wars” where machines react too quickly for diplomatic intervention. Calls for international regulations governing autonomous weapons are increasing, but states worry about being outpaced by adversaries if they halt development.

Surveillance and Intelligence in the AI Era

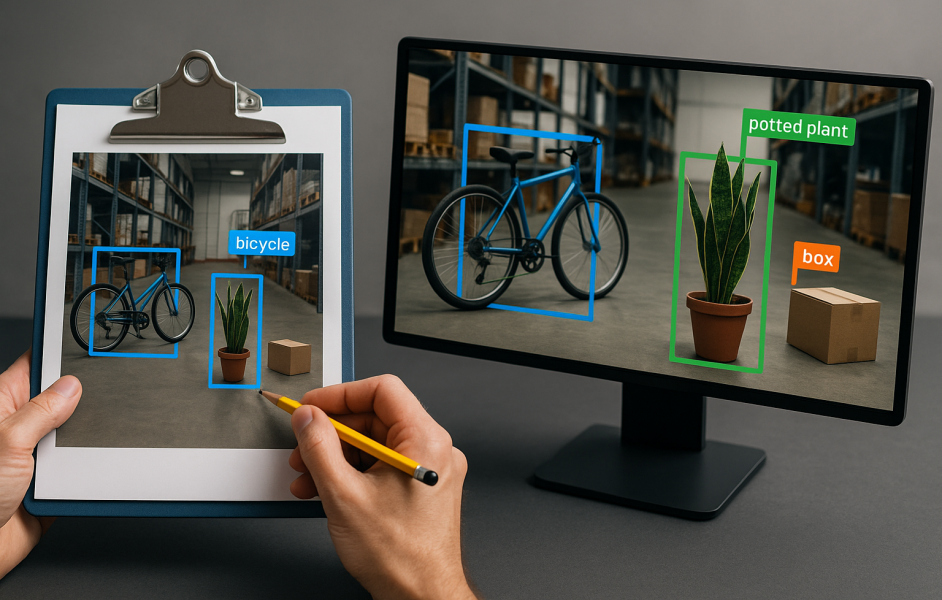

Intelligence agencies that once relied on human analysts to scrutinize reports and video feeds now depend on AI to process millions of images and messages every hour. In some countries, such as China, AI monitors citizens, tracking everything from minor infractions to online activity. Along the U.S.–Mexico border, solar-powered towers equipped with cameras and thermal sensors scan vast stretches of desert. AI distinguishes human from animal movement and promptly alerts patrolling agents. This “virtual wall” extends surveillance capabilities beyond what human eyes can achieve alone.

Although these innovations enhance monitoring capabilities, they can also amplify mistakes. Facial recognition technologies have been shown to misidentify women and individuals with darker skin tones significantly more often than white males. A single misidentification can lead to unwarranted detention or scrutiny of innocent individuals. Policymakers are advocating for algorithm audits, clear appeals processes, and human oversight prior to any significant actions.
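One simple form an algorithm audit can take is comparing error rates across demographic groups. The Python sketch below is a hedged illustration only: the group labels and trial results are made up for the example, and the function name is hypothetical rather than part of any real auditing tool. It computes the false match rate, meaning the share of pairs showing different people that the system wrongly declared a match.

```python
from collections import defaultdict

def false_match_rate_by_group(trials):
    """trials: iterable of (group, predicted_match, true_match) tuples.
    Returns {group: false matches / pairs that truly do not match}."""
    false_matches = defaultdict(int)
    non_matches = defaultdict(int)
    for group, predicted, actual in trials:
        if not actual:  # only pairs of different people can yield a false match
            non_matches[group] += 1
            if predicted:
                false_matches[group] += 1
    return {group: false_matches[group] / total for group, total in non_matches.items()}

# Made-up audit data: (group, system said "match", pair really was a match).
trials = [
    ("group_a", False, False), ("group_a", False, False),
    ("group_a", True, False),  ("group_a", False, False),
    ("group_a", True, True),
    ("group_b", True, False),  ("group_b", False, False),
    ("group_b", True, False),  ("group_b", False, False),
    ("group_b", True, True),
]
rates = false_match_rate_by_group(trials)
print(rates)
```

A large gap between groups, as in this invented data, is the kind of signal an audit would surface for human review before the system is trusted with consequential decisions.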

Modern conflicts are fought not only with missiles and code but also with narratives. In March 2022, a deepfake video depicting Ukraine’s President ordering troops to surrender circulated online before being debunked by fact-checkers. During the 2023 Israel–Hamas conflict, AI-generated misinformation favoring specific policy viewpoints flooded social media in an effort to skew public sentiment.

False information often spreads faster than governments can respond. This is especially troublesome during elections, when AI-generated content is frequently used to influence voter behavior. Voters struggle to distinguish authentic images and videos from AI-crafted ones. In response, governments and technology companies are launching initiatives to scan for the signatures of AI-generated content, yet the race remains tight: creators of misinformation are refining their methods as quickly as defenders can improve their detection measures.

Armed forces and intelligence agencies gather extensive data, including hours of drone footage, maintenance records, satellite images, and open-source intelligence. AI helps by sorting this material and highlighting what matters. NATO recently adopted a system modeled after the U.S. Project Maven, integrating databases from 30 member nations to provide planners with a cohesive operational view. This system anticipates enemy movements and highlights potential supply shortages. The U.S. Special Operations Command uses AI to help draft its annual budget by examining invoices and recommending reallocations. Similar AI platforms predict engine failures, schedule repairs in advance, and tailor flight simulations to individual pilots’ requirements.

AI in Law Enforcement and Border Control

Police and immigration officials are adopting AI for tasks that require constant vigilance. At busy airports, biometric kiosks speed up traveler identification. Pattern-recognition algorithms analyze travel histories to identify possible cases of human trafficking or drug smuggling. Notably, a 2024 partnership in Europe used such tools to dismantle a smuggling operation transporting migrants via cargo ships. These advancements can strengthen border security and help apprehend criminals. However, they are not without challenges. Facial recognition systems may misidentify people from underrepresented demographic groups, leading to errors. Privacy concerns remain significant, prompting debates about how far AI should be used for pervasive monitoring.

The Bottom Line: Balancing AI’s Benefits and Risks

AI is dramatically reshaping national security, presenting both remarkable opportunities and considerable challenges. It enhances protection against cyber threats, sharpens military precision, and aids in decision-making. However, it also has the potential to disseminate falsehoods, invade privacy, and commit fatal errors. As AI becomes increasingly ingrained in security frameworks, we must strike a balance between leveraging its benefits and managing its risks. This will necessitate international cooperation to establish clear regulations governing the use of AI. In essence, AI remains a powerful tool; the manner in which we wield it will ultimately determine the future of security. Exercising caution and wisdom in its application will be essential to ensure that it serves to protect rather than harm.

Frequently Asked Questions About AI and National Security

FAQ 1: How is AI changing the landscape of national security?

Answer: AI is revolutionizing national security by enabling quicker decision-making through data analysis, improving threat detection with predictive analytics, and enhancing cybersecurity measures. Defense systems are increasingly utilizing AI to analyze vast amounts of data, identify patterns, and predict potential threats, making surveillance and intelligence operations more efficient.

FAQ 2: What are the ethical concerns surrounding AI in military applications?

Answer: Ethical concerns include the potential for biased algorithms leading to unjust targeting, the risk of autonomous weapons making life-and-death decisions without human oversight, and the impacts of AI-driven warfare on civilian populations. Ensuring accountability, transparency, and adherence to humanitarian laws is crucial as nations navigate these technologies.

FAQ 3: How does AI improve cybersecurity in national defense?

Answer: AI enhances cybersecurity by employing machine learning algorithms to detect anomalies and threats in real time, automating responses to cyber attacks, and predicting vulnerabilities before they can be exploited. This proactive approach allows national defense systems to stay ahead of potential cyber threats and secure sensitive data more effectively.

FAQ 4: What role does AI play in intelligence gathering?

Answer: AI assists in intelligence gathering by processing and analyzing vast amounts of data from diverse sources, such as social media, satellite imagery, and surveillance feeds. It identifies trends, assesses risks, and generates actionable insights, providing intelligence agencies with a more comprehensive picture of potential threats and aiding in strategic planning.

FAQ 5: Can AI exacerbate international tensions?

Answer: Yes, the deployment of AI in military contexts can escalate international tensions. Nations may engage in an arms race to develop advanced AI applications, potentially leading to misunderstandings or conflicts. The lack of global regulatory frameworks to govern AI in military applications increases the risk of miscalculations and misinterpretations among nation-states.