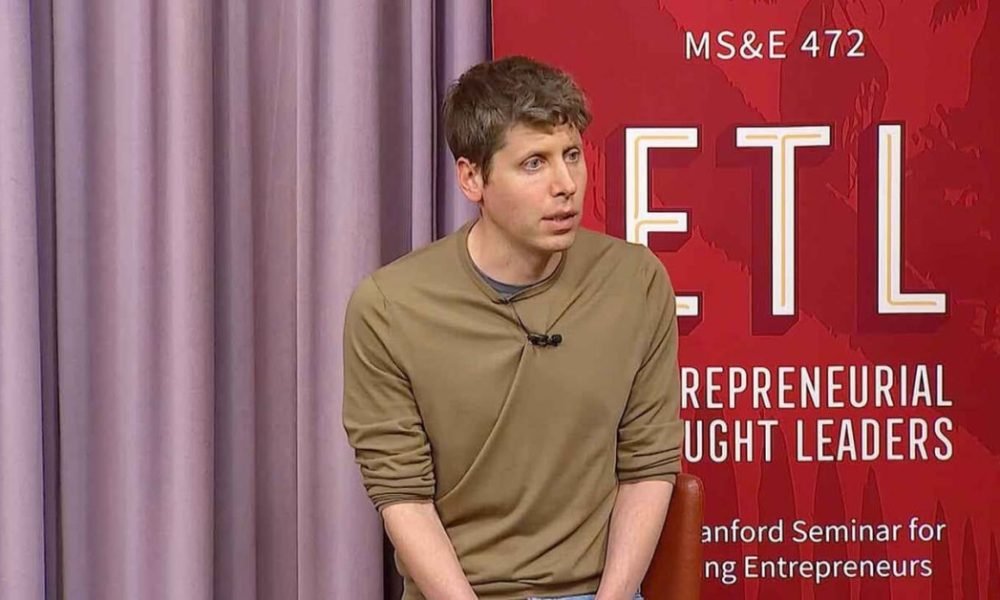

Sam Altman Responds to Home Attack and Trust Issues Amidst New Yorker Profile

OpenAI CEO Sam Altman shared a blog post on Friday, addressing an alarming incident at his residence and the fallout from a recent New Yorker profile questioning his integrity.

Incident at Altman’s Home

In the early hours of Friday, a Molotov cocktail was reportedly thrown at Altman’s home in San Francisco. Thankfully, no one was injured. The suspect was later apprehended at OpenAI’s headquarters, where he threatened to burn down the building, according to the San Francisco Police Department.

Connection to Recent Media Scrutiny

Police have not publicly named the suspect. Altman noted that the attack came shortly after the publication of “an incendiary article” about him, and suggested that the article, released during a period of heightened anxiety around AI, might have exacerbated risks to his safety.

Rethinking the Power of Words

“I brushed it aside,” Altman admitted, “but now I find myself awake in the middle of the night, frustrated, realizing I underestimated the impact of narratives.”

About the Investigative Article

The article in question was a comprehensive investigation by Ronan Farrow, known for his Pulitzer-winning work on the Harvey Weinstein scandal, and Andrew Marantz, a noted technology and politics journalist. They reported that over 100 individuals familiar with Altman’s business interactions described him as possessing an exceptional “will to power” that sets him apart even among high-profile industrialists.

Concerns About Trustworthiness

Farrow and Marantz echoed sentiments from prior journalists who have examined Altman’s character. One anonymous board member remarked that Altman combines a strong desire for approval with a troubling disregard for the repercussions of deceit.

Altman’s Reflections on Leadership

In response to the backlash, Altman reflected on his career, acknowledging both his accomplishments and his missteps. He specifically cited a tendency to avoid conflict, which he believes has led to significant challenges for him and OpenAI.

Addressing Past Mistakes

He expressed regret over “handling disagreements poorly” with OpenAI’s previous board, which resulted in considerable turmoil for the organization. “I am not proud of how I navigated that situation,” he remarked, alluding to his removal and subsequent reinstatement as CEO in 2023.

The Need for Change in AI Dynamics

Altman recognized the dramatic tensions within the AI field, attributing them to what he termed a “ring of power” dynamic that drives individuals to irrational behavior. He asserted that while AGI itself is not the “ring,” the obsessive pursuit of control over it can lead organizations astray.

A Vision for Cooperative Progress

As a solution, he proposed sharing AI technology widely so that no single entity holds dominion over it. “There’s a way to move forward without anyone claiming the ring,” he stated.

Call for Constructive Discourse

Concluding his remarks, Altman extended an invitation for open, good-faith criticism and constructive discussion, reiterating his belief in technology’s potential to vastly improve our futures.

“As we engage in this discourse, we must curb the inflammatory rhetoric and strive to minimize conflict, both figuratively and literally,” he urged.

Frequently Asked Questions

FAQ 1: What incident prompted Sam Altman to respond?

Answer: A Molotov cocktail was reportedly thrown at Altman’s San Francisco home in the early hours of Friday. In a blog post published the same day, he addressed both the attack and a recent New Yorker profile that questioned his integrity.

FAQ 2: What were Altman’s main concerns about the New Yorker article?

Answer: Altman called the piece “an incendiary article” and suggested that, published during a period of heightened anxiety around AI, it might have exacerbated risks to his safety. At the same time, he invited open, good-faith criticism and constructive discussion.

FAQ 3: How did Altman react to the attack on his home?

Answer: Altman wrote that he had “brushed it aside” at first, but that he now finds himself awake in the middle of the night, frustrated, realizing he underestimated the impact of narratives. No one was injured in the attack.

FAQ 4: What broader issues did Altman address in his response?

Answer: Altman reflected on his own missteps, including a tendency to avoid conflict and his poor handling of disagreements with OpenAI’s previous board. He also described a “ring of power” dynamic in the AI field, argued for sharing AI technology widely so that no single entity controls it, and called for less inflammatory rhetoric.

FAQ 5: How has Altman’s status in the tech community affected the scrutiny he faces?

Answer: As a prominent figure in the tech community, Altman faces heightened scrutiny and media attention. This situation illustrates the challenges that public figures navigate regarding personal safety and public discourse in the digital age.