Artificial Intelligence (AI) applications, from natural language processing to autonomous vehicles, have become widespread and now affect many aspects of daily life. This progress has increased the energy demand of the data centers that power AI workloads.

The growth of AI tasks has transformed data centers into facilities for training neural networks, running simulations, and supporting real-time inference. As AI algorithms continue to evolve, the demand for computational power increases, straining existing infrastructure and posing challenges in power management and energy efficiency.

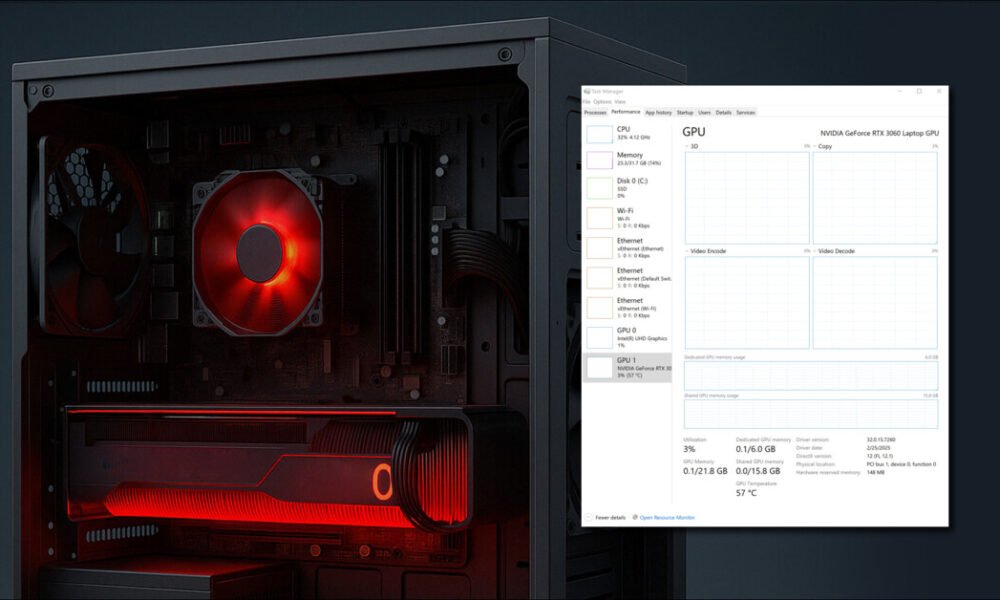

This rapid growth in AI applications also strains cooling systems, which must dissipate the heat generated by high-performance GPUs and consume more electricity as a result. Achieving a balance between technological progress and environmental responsibility is crucial: as AI innovation accelerates, each advancement should contribute both to scientific progress and to a sustainable future.

The Impact of AI on Data Center Power and Sustainability

According to the International Energy Agency (IEA), data centers consumed approximately 460 terawatt-hours (TWh) of electricity globally in 2022, and projections indicate that consumption could surpass 1,000 TWh by 2026. This increase poses challenges for energy grids and underscores the need for efficiency improvements and regulatory measures.
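To put the two IEA figures in perspective, the short sketch below computes the compound annual growth rate they imply. It assumes smooth year-over-year growth between 2022 and 2026, which is a simplification made here for illustration; the function name is hypothetical.

```python
def implied_annual_growth(start_twh: float, end_twh: float, years: int) -> float:
    """Compound annual growth rate that takes start_twh to end_twh in `years` years."""
    if start_twh <= 0 or years <= 0:
        raise ValueError("start_twh and years must be positive")
    return (end_twh / start_twh) ** (1 / years) - 1


# Figures quoted in the text: about 460 TWh in 2022, about 1,000 TWh by 2026.
rate = implied_annual_growth(460.0, 1000.0, 2026 - 2022)
print(f"Implied growth: {rate:.1%} per year")
```

Under that assumption, consumption would need to grow by roughly 21% per year to reach the projected level.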

AI has shifted data centers from handling predictable workloads to running dynamic tasks such as machine learning training and real-time analytics, which require flexibility and scalability. AI can also be part of the solution: it improves data center efficiency by predicting loads, optimizing resource allocation, and reducing energy waste. Beyond the data center, it aids in discovering new materials, optimizing renewable energy, and managing energy storage systems.

To strike a balance, data centers must harness the potential of AI while minimizing its energy impact. Collaboration among stakeholders is crucial to creating a sustainable future where AI innovation and responsible energy use go hand in hand.

The Role of GPU Data Centers in AI Innovation

In the age of AI, GPU data centers play a vital role in driving progress across industries. These specialized facilities are equipped with high-performance GPUs that accelerate AI workloads through parallel processing.

Unlike CPUs, which have a small number of cores optimized for sequential work, GPUs have hundreds to thousands of simpler cores that perform many calculations simultaneously, making them well suited to deep learning and neural network training. This parallelism shortens training times for AI models on vast datasets. GPUs also excel at matrix operations, a fundamental requirement of many AI algorithms, because each element of a matrix product can be computed independently of the others.
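The reason matrix operations parallelize so well can be seen in a small sketch. The code below is a plain-Python illustration, not GPU code: it hands each output row of a matrix product to a separate worker, which is possible because no row depends on any other. The function names are hypothetical, and Python threads are used only to show the structure of the computation; they will not deliver GPU-like speedups.

```python
from concurrent.futures import ThreadPoolExecutor


def row_times_matrix(row, b):
    """Compute one output row: the product of a single row of A with matrix B."""
    inner = len(b)
    cols = len(b[0])
    return [sum(row[k] * b[k][j] for k in range(inner)) for j in range(cols)]


def matmul_parallel(a, b, workers=4):
    """Multiply matrices a and b, computing each output row as an independent task."""
    if any(len(row) != len(b) for row in a):
        raise ValueError("inner dimensions of a and b do not match")
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Rows share no intermediate state, so they can be processed in any order.
        return list(pool.map(lambda row: row_times_matrix(row, b), a))


result = matmul_parallel([[1, 2], [3, 4]], [[5, 6], [7, 8]])
print(result)  # [[19, 22], [43, 50]]
```

A GPU applies the same idea at a much finer grain, assigning individual output elements or tiles to its many cores.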

As AI models become more intricate, GPUs offer scalability by efficiently distributing computations across their cores, ensuring effective training processes. The increase in AI applications highlights the importance of robust hardware solutions like GPUs to meet the growing computational demands. GPUs are instrumental in model training and inference, leveraging their parallel processing capabilities for real-time predictions and analyses.

In various industries, GPU data centers drive transformative changes, enhancing medical imaging processes in healthcare, optimizing decision-making processes in finance, and enabling advancements in autonomous vehicles by facilitating real-time navigation and decision-making.

Furthermore, the proliferation of generative AI models, such as Generative Adversarial Networks (GANs), adds complexity to the energy equation. These models, used for content creation and design, demand extensive training cycles, which increases energy consumption in data centers. Deploying AI responsibly is vital to mitigating the environmental impact of data center operations, and it requires organizations to prioritize energy efficiency and sustainability.

Energy-Efficient Computing for AI

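GPUs can reduce total energy use even though an individual GPU often draws more power than a CPU: because they finish parallel workloads much faster, the energy consumed per task (power multiplied by time) can be lower. For highly parallel work such as large-scale AI projects, GPUs typically deliver more performance per watt than CPUs. When multiple GPUs work together efficiently, energy consumption per task stays low, which makes them cost-effective in the long run.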
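The energy argument above comes down to energy being power multiplied by time. The sketch below works through one comparison; the wattages and runtimes are hypothetical numbers chosen for illustration, not measurements of any real hardware.

```python
def energy_kwh(power_watts: float, hours: float) -> float:
    """Energy in kilowatt-hours consumed at a constant power draw."""
    return power_watts * hours / 1000.0


# Hypothetical figures: the GPU draws twice the power but finishes ten times sooner.
cpu_energy = energy_kwh(power_watts=200.0, hours=50.0)
gpu_energy = energy_kwh(power_watts=400.0, hours=5.0)

print(f"CPU run: {cpu_energy} kWh")  # 10.0 kWh
print(f"GPU run: {gpu_energy} kWh")  # 2.0 kWh
```

With these assumed inputs the GPU run uses a fifth of the energy. The saving disappears if the speedup is smaller than the increase in power draw, so the comparison has to be made per workload.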

Specialized GPU libraries further enhance energy efficiency by implementing common AI operations in ways that exploit the GPU's parallel architecture, delivering high performance without wasting energy. Although GPUs have a higher initial cost, their long-term benefits, including a lower total cost of ownership (TCO), can justify the investment.
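A simplified way to reason about TCO is to add the purchase cost to the cost of the electricity consumed over the life of the workload. The sketch below does exactly that and nothing more: it ignores cooling, maintenance, staffing, and depreciation, and every figure in it is a hypothetical placeholder.

```python
def total_cost_of_ownership(purchase_cost: float, power_watts: float,
                            busy_hours: float, price_per_kwh: float) -> float:
    """Purchase cost plus electricity cost for the hours needed to finish the work."""
    energy_kwh = power_watts * busy_hours / 1000.0
    return purchase_cost + energy_kwh * price_per_kwh


# Hypothetical inputs: the GPU option costs more up front and draws more power,
# but needs far fewer hours to complete the same workload.
cpu_tco = total_cost_of_ownership(10_000.0, 2_000.0, 20_000.0, 0.10)
gpu_tco = total_cost_of_ownership(12_000.0, 3_000.0, 4_000.0, 0.10)

print(f"CPU option: {cpu_tco:,.2f}")
print(f"GPU option: {gpu_tco:,.2f}")
```

With these assumed numbers the GPU option comes out cheaper despite its higher purchase price, but the result is sensitive to the inputs and can reverse for workloads that parallelize poorly.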

Additionally, GPU-based systems can scale up computing capacity without a proportional increase in energy use. Cloud providers offer pay-as-you-go GPU instances, enabling researchers to access resources as needed while keeping costs low. This flexibility helps balance performance and expense in AI work.

Collaborative Efforts and Industry Responses

Collaborative efforts and industry responses are essential for addressing energy consumption challenges in data centers, particularly concerning AI workloads and grid stability.

Organizations such as The Green Grid, an industry consortium, and the U.S. Environmental Protection Agency (EPA) promote energy-efficient practices, with initiatives like the EPA's Energy Star certification driving adherence to standards.

Leading data center operators like Google and Microsoft invest in renewable energy sources and collaborate with utilities to integrate clean energy into the grids that supply their facilities.

Efforts to improve cooling systems and repurpose waste heat are ongoing, supported by initiatives like the Open Compute Project, which was initiated by Facebook.

Demand response programs, in which data centers coordinate with utilities to reduce or shift load during peak hours, are crucial for managing the energy consumption of AI workloads. Collaborative initiatives also promote edge computing and distributed AI processing, which reduce reliance on long-distance data transmission and save energy.
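One way a data center can take part in demand response is to move deferrable work, such as model training, out of the grid's peak window while leaving latency-sensitive work untouched. The sketch below illustrates that idea only; the peak window, the `Job` type, and the scheduling rule are all assumptions made for this example rather than a description of any real program.

```python
from dataclasses import dataclass

# Hypothetical peak window: 17:00 through 20:59.
PEAK_HOURS = range(17, 21)


@dataclass
class Job:
    name: str
    requested_hour: int  # hour of day, 0-23
    deferrable: bool     # True if the job can wait (e.g. batch training)


def schedule_start_hour(job: Job, peak_hours=PEAK_HOURS) -> int:
    """Return the hour the job should start, deferring it past peak if allowed."""
    if not job.deferrable or job.requested_hour not in peak_hours:
        return job.requested_hour
    # Walk forward hour by hour (wrapping at midnight) to the first off-peak slot.
    for offset in range(1, 24):
        candidate = (job.requested_hour + offset) % 24
        if candidate not in peak_hours:
            return candidate
    return job.requested_hour  # every hour is peak, so nothing is gained by waiting


training = Job("nightly-training", requested_hour=18, deferrable=True)
inference = Job("live-inference", requested_hour=18, deferrable=False)

print(schedule_start_hour(training))   # 21
print(schedule_start_hour(inference))  # 18
```

A production scheduler would also account for job duration, deadlines, and price or carbon signals from the utility.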

Future Outlook

As AI applications continue to grow across various industries, the demand for data center resources will increase. Collaborative efforts among researchers, industry leaders, and policymakers are essential for driving innovation in energy-efficient hardware and software solutions to meet these challenges.

Continued innovation in energy-efficient computing is vital to address this rising demand. Prioritizing energy efficiency in data center operations and investing in specialized hardware such as AI accelerators will shape the future of sustainable data centers.

Balancing AI advancement with sustainable energy practices is crucial, requiring responsible AI deployment through collective action to minimize the environmental impact. Aligning AI progress with environmental stewardship can create a greener digital ecosystem benefiting society and the planet.

Conclusion

As AI continues to revolutionize industries, the increasing energy demands of data centers present significant challenges. However, collaborative efforts, investments in energy-efficient computing solutions like GPUs, and a commitment to sustainable practices offer promising pathways forward.

Prioritizing energy efficiency, embracing responsible AI deployment, and fostering collective actions can help achieve a balance between technological advancement and environmental stewardship, ensuring a sustainable digital future for generations to come.

GPU data centers require large amounts of electricity to power the high-performance graphics processing units used for AI innovation. This strains the power grids due to the increased energy demand.

GPU data centers can balance AI innovation and energy consumption by implementing energy-efficient practices, such as using renewable energy sources, optimizing cooling systems, and adopting power management technologies.

AI innovation can be sustained without straining power grids by improving the energy efficiency of GPU data centers, investing in renewable energy sources, and promoting energy conservation practices.