Microsoft Reassures Customers: Anthropic’s AI Models Remain Accessible Amid Department of Defense Controversy

Enterprises and startups that use Anthropic's Claude through Microsoft products need not worry about losing access to the model: Microsoft has confirmed that it will remain available.

Microsoft’s Commitment to Anthropic Models

Microsoft has become the first major tech firm to guarantee that its customers will keep access to Anthropic's AI models, despite escalating tensions between Anthropic and the U.S. Department of Defense.

Department of Defense Designates Anthropic as Supply Chain Risk

The Defense Department has labeled the AI startup as a supply chain risk following its refusal to grant unrestricted access to its technology for contentious applications, including mass surveillance and autonomous weaponry.

Implications of the Supply Chain Risk Designation

This designation, typically reserved for foreign adversaries, restricts the Pentagon's use of Anthropic's products. It also requires companies that work with the Pentagon to certify that they do not use Anthropic's models. Anthropic has announced that it plans to challenge the designation in court.

Microsoft’s Assurance to Customers

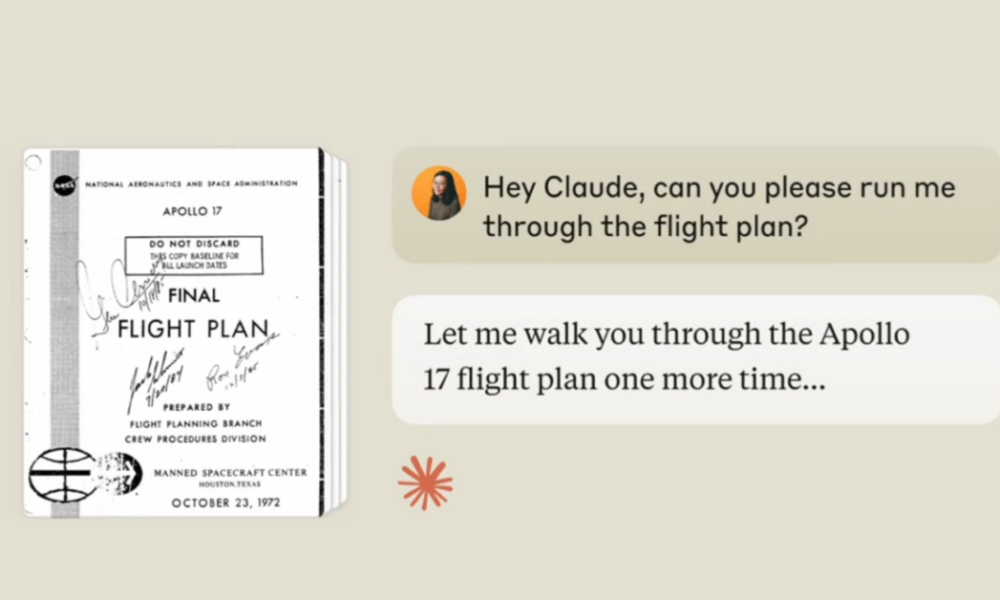

Microsoft, which provides a range of products to federal agencies, including the Defense Department, has stated that Anthropic's models will remain available to all of its customers except those contracting directly with the Pentagon. A spokesperson noted, "Our legal team has determined that Anthropic products, including Claude, can continue to be offered to our customers through platforms like M365, GitHub, and Microsoft's AI Foundry."

CEO Dario Amodei’s Stance on the Designation

This assurance matches the position of Anthropic CEO Dario Amodei, who has emphasized that the designation applies only to the use of Claude in direct contracts with the Department of Defense and does not restrict other contractual relationships.

Ongoing Growth for Claude Despite Challenges

Despite pressure from the Department of Defense, Claude's consumer adoption has continued to grow since Anthropic pushed back against the Pentagon's demands.


Here are five FAQs about the availability of Anthropic's Claude:

FAQ 1: What is Anthropic Claude?

Answer: Anthropic Claude is an advanced AI language model developed by Anthropic, designed to assist users with a wide range of tasks, including natural language understanding and generation.

FAQ 2: Who can access Anthropic Claude?

Answer: Claude is available to most customers, including businesses and other organizations. The Department of Defense's designation, however, bars its use in direct contracts with the U.S. Department of Defense.

FAQ 3: Why is Anthropic Claude not available to the Defense Department?

Answer: The Department of Defense designated Anthropic a supply chain risk after the company refused to grant unrestricted access to its technology for contentious applications, including mass surveillance and autonomous weaponry. Anthropic has said it plans to contest the designation legally.

FAQ 4: Are there any alternatives for Department of Defense personnel?

Answer: Yes. Department of Defense personnel can use other AI models or solutions available for government use, subject to regulatory and operational requirements.

FAQ 5: How can I use Anthropic Claude for my organization?

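Answer: Organizations can access Claude by signing up directly through Anthropic's website or through authorized partners such as Microsoft, which offers the model on platforms including M365, GitHub, and AI Foundry. In either case, organizations should make sure they meet any applicable compliance and usage requirements.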