Nvidia’s GTC Conference: A Glimpse into the Future of Tech

Nvidia’s GTC conference showcased a wealth of new technologies and ambitious goals, including trillion-dollar sales projections, new graphics technology capable of enhancing video games, and the bold assertion that every company needs an OpenClaw strategy. There was even an amusing robot version of Olaf from Disney’s “Frozen.”

Recapping Jensen Huang’s Keynote

In a recent episode of the Equity podcast, TechCrunch’s Kirsten Korosec, Sean O’Kane, and I broke down CEO Jensen Huang’s keynote and what it means for Nvidia’s future. Naturally, Olaf’s antics were a hot topic, especially the moment his microphone had to be cut because he would not stop talking.

Engineering vs. Social Challenges

Sean argued that even a flawless demo would not settle the bigger issue: the focus stays on “engineering challenges” rather than on the “messy gray areas” of the technology’s social implications.

“What happens when a kid kicks Olaf over?” Sean questioned. “Every child witnessing that could have their Disney experience ruined, impacting the brand negatively.”

Insights from the Podcast Discussion

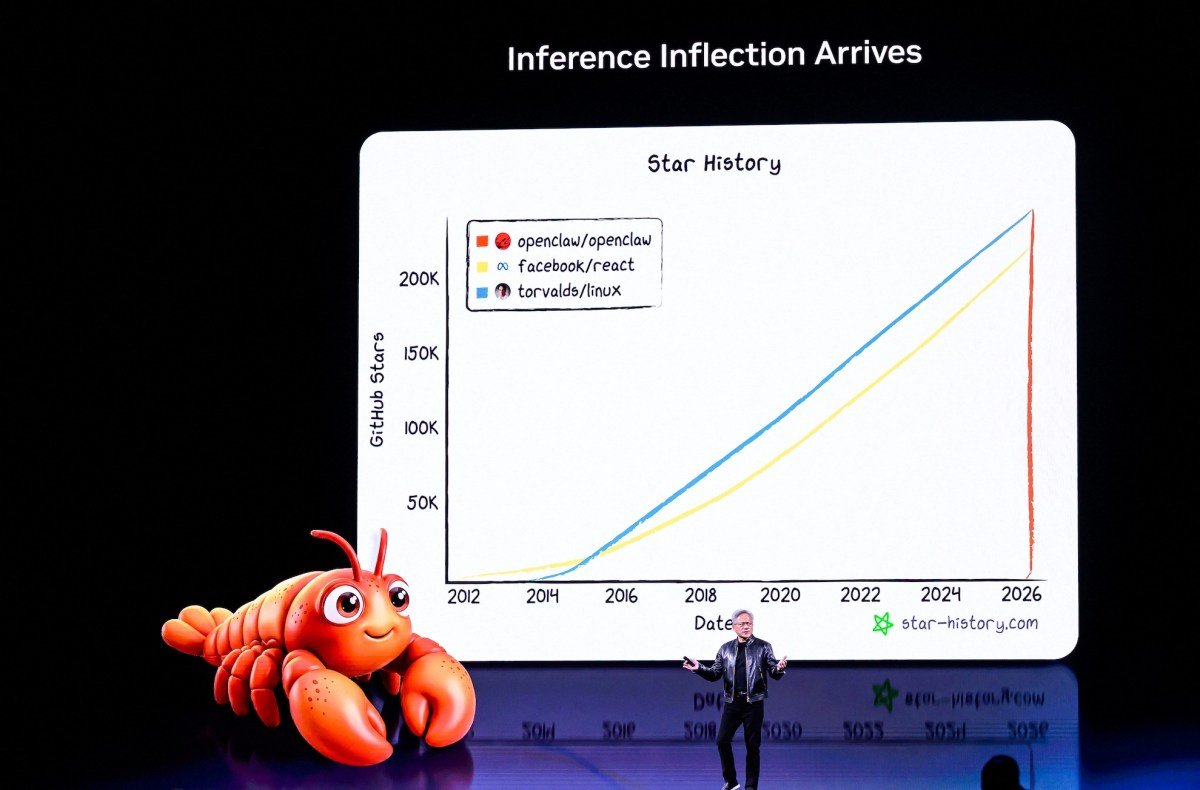

Anthony: “[CEO Jensen Huang] emphasizes that every company should adopt an OpenClaw strategy. That’s a compelling statement, especially at such a pivotal moment for OpenClaw.”

With OpenClaw’s founder now at OpenAI, the project could either thrive as open source or stagnate. Nvidia’s investment could help it grow, but only time will tell whether the initiative gains traction.


Evaluating NemoClaw and Its Impact

Kirsten: “For Nvidia, launching NemoClaw incurs virtually no cost, but inaction carries greater risk. Jensen’s assertion that every enterprise needs an OpenClaw strategy signals Nvidia’s need for solutions that allow it to integrate into other companies.”

The Sky’s the Limit with Robotics

Sean: “We haven’t even discussed what could propel Nvidia to become the first $100 trillion company: a robot Olaf.”

Anthony: “How could I forget?”

Kirsten: “Just make sure to catch the end of the two-and-a-half-hour presentation.”

During the demo featuring Olaf, Jensen showcased Nvidia’s robotics technology. It was unclear whether Olaf’s speech was spontaneous or pre-programmed. Ultimately, the microphone was cut when Olaf began rambling post-presentation.

Sean: “Next step: give Olaf a wheelbase, and I know just the entrepreneur for the job.”

Demos like this are often pitched as future Disney park attractions, where visitors could interact with characters like Olaf. They can be whimsical, but they also raise real engineering and integration questions.

Social Implications and Job Creation

Yet the rollout of such technology often gives too little thought to its social ramifications. The YouTube channel Defunctland has produced an in-depth video on Disney’s efforts to bring robotics into its parks.

For all the impressive engineering, the central question remains: What happens if a child knocks Olaf over? That scenario could spoil the experience for other guests and damage the Disney brand.

These social questions deserve serious attention, especially amid the current hype around humanoid robotics, where excitement over engineering feats tends to overshadow how the technology will actually fit into everyday life.

Kirsten: “Let’s not forget, Olaf will require a human ‘babysitter’ at Disneyland, likely dressed as Elsa, creating job opportunities in the process.”

Do You Want to Build a Robot Snowman? FAQs

FAQ 1: What materials do I need to build a robot snowman?

Answer: To build a robot snowman, you’ll need snow (or a snow-like substitute), spare parts such as buttons, lights, and wires, a sturdy base such as a plastic or wooden platform, and tools for assembly. Don’t forget some decorations for personality!

FAQ 2: Is it difficult to build a robot snowman?

Answer: The difficulty depends on your design and materials. A simple version can be quite easy and fun! However, adding complex features like movement or sensors may require some technical skill and knowledge of electronics.

FAQ 3: Can I incorporate technology into my robot snowman?

Answer: Absolutely! You can add basic circuits, sensors, or even a small motor to make your snowman light up, make sounds, or move. A programmable microcontroller board such as an Arduino can make the project more interactive.

FAQ 4: How can I make my robot snowman weather-resistant?

Answer: To help your robot snowman withstand the elements, protect the electronics with waterproof materials. Encasing circuits in a protective housing and using moisture-resistant decorations will help it hold up better outdoors.

FAQ 5: What are some creative decoration ideas for my robot snowman?

Answer: Get creative by using items like LED lights for a glowing effect, colored buttons for eyes, scarves made from fabric scraps, and even recycled items like bottle caps for a whimsical touch. Personalize it with unique features like a top hat or quirky accessories!